Last updated: December 2025

The AI landscape is shifting from "chatbots" to "agents" that can actually do things. But how do you safely connect your existing backend systems—your microservices, databases, and APIs—to these agents? Enter the Model Context Protocol (MCP).

In this post, I'll break down the 5 Ws of MCP and show you a real-world code example of how to wrap your existing microservices into an MCP server.

The 5 Ws of MCP

1. WHAT is it?

MCP is an open protocol that standardizes how AI models interact with your data and tools. Think of it as USB for AI. Just as USB lets you plug any mouse into any computer, MCP lets you plug any data source (Slack, Postgres, your internal API) into any AI client (Claude, Cursor, etc.).

2. WHY use it?

The Problem: The "m-by-n" Complexity

In a world without a standard protocol, the integration work is multiplicative: every AI client needs its own connector to every data source. Imagine you want to connect 3 AI clients (Claude, ChatGPT, an IDE assistant) to 3 data sources (Google Drive, Linear, Postgres). You would need to build 9 separate integrations (3 clients × 3 sources). Each one requires maintenance, security audits, and updates.

The Solution: Connect Once, Run Anywhere

MCP replaces this mess with a universal standard.

- You build ONE MCP server for your data source (e.g., "Postgres MCP Server").

- That single server immediately works with ALL MCP-compliant clients.

- If a new MCP-compliant AI tool comes out tomorrow, it can talk to your data without you writing any new integration code.
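To make the arithmetic concrete, here is a minimal sketch comparing the two approaches. The function names are made up for illustration, and the MCP count assumes each AI client implements the protocol once and each data source gets exactly one server.

```typescript
// Without a shared protocol: one bespoke connector per (client, source) pair.
function pointToPointIntegrations(clients: number, sources: number): number {
  return clients * sources;
}

// With MCP (assumption): one protocol implementation per client
// plus one MCP server per data source.
function mcpIntegrations(clients: number, sources: number): number {
  return clients + sources;
}

console.log(pointToPointIntegrations(3, 3)); // 9
console.log(mcpIntegrations(3, 3)); // 6
```

The gap widens as the numbers grow: with 10 clients and 20 sources, that is 200 bespoke integrations versus 30 protocol implementations.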

3. WHEN to use it?

Use MCP when you want to enable "Human-in-the-loop" agentic workflows. For example:

- Support: A support agent needs to look up a user's recent orders from your internal tool.

- DevOps: You want to ask your IDE to "restart the staging server" (safe, controlled access).

- Business Intelligence: You want to ask an LLM questions about live data in your SQL database.
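One way to picture the "human-in-the-loop" part is an approval gate in front of risky tools. The sketch below is an assumption about how a host application might implement this, not something the MCP specification defines; GatedTool, Approver, and callWithApproval are hypothetical names.

```typescript
// Hypothetical shapes for illustration; not types from any MCP SDK.
interface GatedTool {
  name: string;
  destructive: boolean; // true if the tool changes state (restarts, refunds, deletes)
  run: (args: Record<string, string>) => string;
}

// Stands in for a UI prompt that asks the human to confirm the action.
type Approver = (toolName: string, args: Record<string, string>) => boolean;

function callWithApproval(
  tool: GatedTool,
  args: Record<string, string>,
  approve: Approver
): string {
  // Read-only tools run directly; destructive ones need an explicit yes.
  if (tool.destructive && !approve(tool.name, args)) {
    return `Denied: ${tool.name} was not approved`;
  }
  return tool.run(args);
}

const restartServer: GatedTool = {
  name: "restart-server",
  destructive: true,
  run: (args) => `Restarting ${args["environment"]}`,
};

// Example policy: only approve restarts that target staging.
const stagingOnly: Approver = (_toolName, args) => args["environment"] === "staging";

console.log(callWithApproval(restartServer, { environment: "staging" }, stagingOnly));
console.log(callWithApproval(restartServer, { environment: "production" }, stagingOnly));
```

In a real host the approver would be a confirmation dialog rather than a hardcoded policy, but the control flow is the same: the model proposes the call and a human decides whether it runs.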

4. WHERE does it sit?

It sits between the AI Client (the "host" application like Claude Desktop) and your Backend Services. It acts as a gateway or translation layer.

5. HOW does it work?

You run a lightweight server (Node.js, Python, Go) that defines:

- Resources: Data that can be read (like files or logs).

- Tools: Functions that can be called (like refund_order(id)).

- Prompts: Pre-written templates for the AI.
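The three primitives can be sketched as plain TypeScript. This is a dependency-free illustration, not the official SDK: SketchServer, its methods, the logs:// URI, and the summarize-order prompt are all hypothetical, and the handlers are synchronous only to keep the example short.

```typescript
// Hypothetical shapes; the official SDKs define their own types.
interface Resource { uri: string; read: () => string }
interface Tool { name: string; call: (args: Record<string, string>) => string }
interface Prompt { name: string; render: (args: Record<string, string>) => string }

class SketchServer {
  private resources: Resource[] = [];
  private tools: Tool[] = [];
  private prompts: Prompt[] = [];

  addResource(r: Resource): this { this.resources.push(r); return this; }
  addTool(t: Tool): this { this.tools.push(t); return this; }
  addPrompt(p: Prompt): this { this.prompts.push(p); return this; }

  // What a client would see when it asks the server what it offers.
  list(): { resources: string[]; tools: string[]; prompts: string[] } {
    return {
      resources: this.resources.map((r) => r.uri),
      tools: this.tools.map((t) => t.name),
      prompts: this.prompts.map((p) => p.name),
    };
  }

  // Look up a tool by name and run it with the given arguments.
  callTool(name: string, args: Record<string, string>): string {
    for (const tool of this.tools) {
      if (tool.name === name) return tool.call(args);
    }
    throw new Error(`Unknown tool: ${name}`);
  }
}

const sketch = new SketchServer()
  .addResource({ uri: "logs://orders/latest", read: () => "order 999 shipped" })
  .addTool({ name: "refund_order", call: (args) => `Refund issued for order ${args["id"]}` })
  .addPrompt({ name: "summarize-order", render: (args) => `Summarize order ${args["id"]} for the customer.` });

console.log(sketch.list());
console.log(sketch.callTool("refund_order", { id: "999" }));
```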

MCP vs. Service Registry

Use established patterns like Eureka or Consul for Machine-to-Machine discovery (Service A finding Service B).

Use MCP for Human-AI-Machine discovery. MCP doesn't just tell the AI "where" the service is; it tells the AI what the service can do (schema) and how to use it (intent). It provides the "context" that LLMs need to reason about your tools.
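The difference shows up in what each system stores. In the sketch below, the registry entry is a simplified, hypothetical shape (real Eureka or Consul records carry more fields), while the tool descriptor mirrors the name, description, and JSON Schema input that an MCP server advertises for a tool. The host, port, and description text are made-up values.

```typescript
// A service registry answers "where is it?": a name and a network location.
interface RegistryEntry {
  service: string;
  host: string;
  port: number;
}

// An MCP tool descriptor answers "what can it do, and how do I call it?".
interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; description?: string }>;
    required: string[];
  };
}

const registryEntry: RegistryEntry = { service: "order-service", host: "10.0.0.12", port: 50051 };

const toolDescriptor: ToolDescriptor = {
  name: "get-customer-orders",
  description: "List recent orders for a customer by user ID.",
  inputSchema: {
    type: "object",
    properties: { userId: { type: "string", description: "Internal customer ID" } },
    required: ["userId"],
  },
};

// Render the descriptor as a one-line signature, roughly what a model reasons over.
function describeForModel(tool: ToolDescriptor): string {
  const parts: string[] = [];
  for (const key of Object.keys(tool.inputSchema.properties)) {
    const prop = tool.inputSchema.properties[key];
    if (prop) parts.push(`${key}: ${prop.type}`);
  }
  return `${tool.name}(${parts.join(", ")}) - ${tool.description}`;
}

console.log(`${registryEntry.service} @ ${registryEntry.host}:${registryEntry.port}`);
console.log(describeForModel(toolDescriptor));
```

The registry entry is enough for Service A to open a connection, but it says nothing about arguments or intent. The descriptor carries both, which is what lets a model decide when a tool is relevant.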

Real-World Example: The E-Commerce Gateway

Imagine you have a legacy system with these microservices:

- UserService: REST API for user profiles.

- OrderService: gRPC service for order history.

- ProductService: GraphQL API for inventory.

The Goal: Build an AI assistant that can answer "Where is my order #999?" without hardcoding the logic into a chatbot.

The Solution: An MCP Server

We build a small MCP server that wraps these services.

(You can find the full source code for this demo here: GitHub: rnukala1982/mcp-microservices-demo)

Here is the core logic. Instead of exposing raw HTTP endpoints, we expose Tools:

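    // src/index.ts (Simplified)
    import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
    import { z } from "zod";
    // OrderService is your existing internal client; import it from
    // wherever it lives in your codebase.

    // The server name and version here are illustrative.
    const server = new McpServer({ name: "ecommerce-gateway", version: "1.0.0" });

    // Tool: Check Order Status
    server.tool(
      "get-customer-orders",
      // Input schema: the only argument is the customer's user ID
      { userId: z.string() },
      async ({ userId }) => {
        // The MCP server calls your existing internal microservice
        const orders = await OrderService.getOrdersByUser(userId);
        // Results go back to the client as text content
        return {
          content: [{ type: "text", text: JSON.stringify(orders) }],
        };
      }
    );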
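On the wire, the client invokes this tool with a JSON-RPC 2.0 message whose method is tools/call. The sketch below shows roughly what that exchange looks like, using a dependency-free handler instead of the SDK. The fakeOrders data and handleToolCall function are hypothetical stand-ins, and the real SDK handles this framing for you.

```typescript
// Message shapes follow the JSON-RPC 2.0 framing that MCP uses for tool calls.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

type ToolCallResponse =
  | { jsonrpc: "2.0"; id: number; result: { content: { type: "text"; text: string }[] } }
  | { jsonrpc: "2.0"; id: number; error: { code: number; message: string } };

// Hypothetical in-memory stand-in for the real OrderService.
const fakeOrders: Record<string, { id: string; status: string }[]> = {
  "user-42": [{ id: "999", status: "shipped" }],
};

function handleToolCall(req: ToolCallRequest): ToolCallResponse {
  if (req.params.name !== "get-customer-orders") {
    // -32602 is the JSON-RPC "Invalid params" error code.
    return {
      jsonrpc: "2.0",
      id: req.id,
      error: { code: -32602, message: `Unknown tool: ${req.params.name}` },
    };
  }
  const userId = String(req.params.arguments["userId"]);
  const orders = fakeOrders[userId] || [];
  // The response echoes the request id so the client can match them up.
  return {
    jsonrpc: "2.0",
    id: req.id,
    result: { content: [{ type: "text", text: JSON.stringify(orders) }] },
  };
}

const request: ToolCallRequest = {
  jsonrpc: "2.0",
  id: 1,
  method: "tools/call",
  params: { name: "get-customer-orders", arguments: { userId: "user-42" } },
};

console.log(JSON.stringify(handleToolCall(request), null, 2));
```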

Why this is powerful

- Lower Hallucination Risk (on Schema): Each tool declares a typed input schema, so the AI knows exactly what arguments get-customer-orders needs, and malformed calls are rejected instead of executed.

- Security: The MCP server runs locally or inside your secure perimeter. You don't give the LLM your database credentials; you only give it access to specific tools.

- Reusability: Update the tool definition once, and every agent connecting to it gets the new capability immediately.
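The schema point is easiest to see with a validator in front of the handler. The demo relies on zod for this; the sketch below is a hand-rolled, dependency-free approximation of the same idea, and InputSchema and validateArgs are hypothetical names.

```typescript
type FieldType = "string" | "number" | "boolean";

interface InputSchema {
  properties: Record<string, FieldType>;
  required: string[];
}

// Returns a list of problems; an empty list means the call may proceed.
function validateArgs(schema: InputSchema, args: Record<string, unknown>): string[] {
  const errors: string[] = [];
  for (const key of schema.required) {
    if (!(key in args)) errors.push(`missing required argument "${key}"`);
  }
  for (const key of Object.keys(args)) {
    const expected = schema.properties[key];
    if (expected === undefined) {
      errors.push(`unexpected argument "${key}"`);
    } else if (typeof args[key] !== expected) {
      errors.push(`argument "${key}" should be ${expected}, got ${typeof args[key]}`);
    }
  }
  return errors;
}

const getCustomerOrdersSchema: InputSchema = {
  properties: { userId: "string" },
  required: ["userId"],
};

console.log(validateArgs(getCustomerOrdersSchema, { userId: "user-42" })); // no errors
console.log(validateArgs(getCustomerOrdersSchema, { userId: 42 })); // wrong type
console.log(validateArgs(getCustomerOrdersSchema, {})); // missing argument
```

A model can still propose a bad call, but the bad call never reaches OrderService, and the error message gives the model something concrete to correct on its next attempt.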

Conclusion

MCP is the missing link for building agentic systems on top of brownfield architecture. You don't need to rewrite your monolith. You just need to give it a voice.

Check out the full Code Demo on GitHub

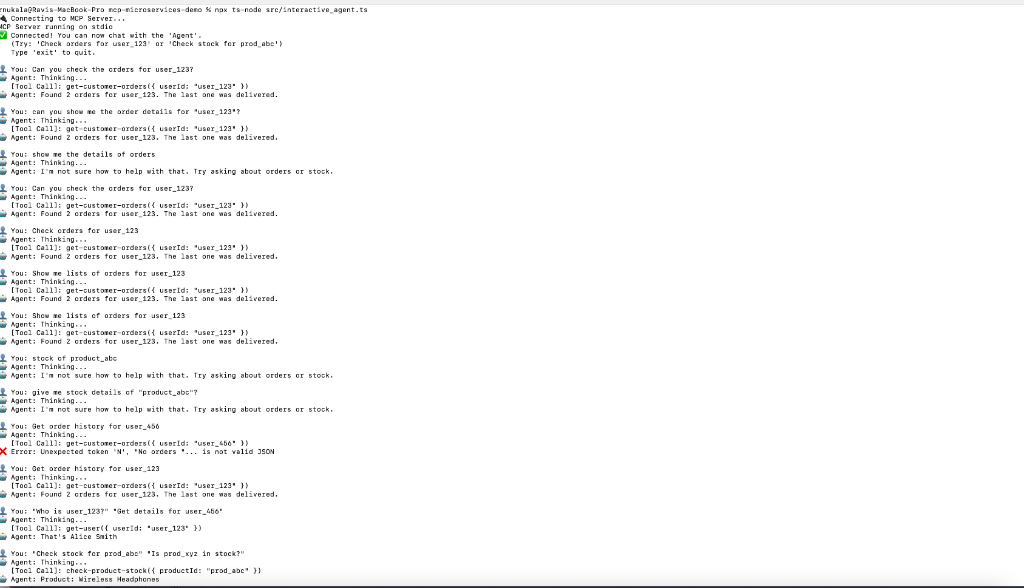

Interactive Agent Demo

Here is what it looks like when you chat with the agent in your terminal: